Spotit Red Team

At Spotit we run red team engagements for customers and internally against our own company. These can include a penetration test against infrastructure and assets, physical breaching, social engineering, and phishing campaigns. A recent targeted phishing campaign by our red team, utilizing the device code phishing technique, resulted in the compromise of a user’s AzureAD account, including access to all Teams messages, e-mail, SharePoint, and internal applications.

The device code phishing technique was perhaps first discussed in a blog post on o365blog.com by Nestori Syynimaa (@DrAzureAD) dated 13th October 2020.

Device code phishing exploits the device authorization grant flow (RFC 8628) in the Microsoft identity platform to give an attacker’s device or application access to the target user’s account or system.
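To make the flow concrete, here is a minimal sketch of the client side of the RFC 8628 polling step, which is the part that waits for someone to enter the code. The grant type string and the error codes come from the RFC; the function names and the "done"/"wait"/"stop" labels are our own illustration, and no network calls are made.

```python
# Sketch of RFC 8628 client-side polling logic (no network I/O).
# Function names and the action labels are illustrative assumptions.

DEVICE_CODE_GRANT = "urn:ietf:params:oauth:grant-type:device_code"


def token_request_body(client_id: str, device_code: str) -> dict:
    """Form fields a client sends to the token endpoint while polling."""
    return {
        "grant_type": DEVICE_CODE_GRANT,
        "client_id": client_id,
        "device_code": device_code,
    }


def next_poll_action(response: dict, interval: int) -> tuple:
    """Decide what to do with one token endpoint response.

    Returns (action, interval), where action is "done", "wait" or "stop".
    """
    if "access_token" in response:
        return ("done", interval)  # the user entered the code and approved
    error = response.get("error")
    if error == "authorization_pending":
        return ("wait", interval)  # code not entered yet, keep polling
    if error == "slow_down":
        return ("wait", interval + 5)  # RFC 8628: back off by 5 seconds
    return ("stop", interval)  # e.g. expired_token or access_denied
```

The point for defenders is that the device holding the device code never sees the user's password or MFA prompt. It simply polls until the person who received the user code completes sign-in on their own browser.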

Tools Used

Using a combination of AAD Internals, TokenTactics, and MSGraph API calls, the red team was able to generate a device code, monitor when the device code was input on the Microsoft site, and gain:

• an access token for ONE specific resource (e.g. Outlook, Teams, SharePoint, …) which is valid for about 60 minutes, and

• a refresh token that can be used to request a new access token for ANY resource, which is valid for 90 DAYS.

Target in Sight

TL;DR: red team got in direct contact with someone at the target company, sent them a Microsoft 'devicelogin' URL and an auth code disguised as a Teams meeting invite, staff member fell for it > complete compromise.

Our highly skilled red team built a plan to target a staff member who would presumably have lots of access, ideally someone in Accounts or Sales. The red team would send a message to the target company, requesting help with an IT Security Assessment. To do this the red team devised a plan to pose as an employee of an IT company operating in the same country.

The red team perused LinkedIn and found a suitable person to spoof.

It’s easy to find and filter employees on LinkedIn

The red team chose the name and role of an employee at a similar company, then created a Gmail account with that person’s name.

Then an e-mail was written up and sent to the sales inbox at the target company. No response was received so the same message was then submitted to the target company’s contact form on their website, and a reply was received.

After some discussion and seemingly no suspicion, an agreement was made to have a Teams meeting urgently.

This is where the red team generated a device code using a VPN in the target’s country, and the payload was sent.

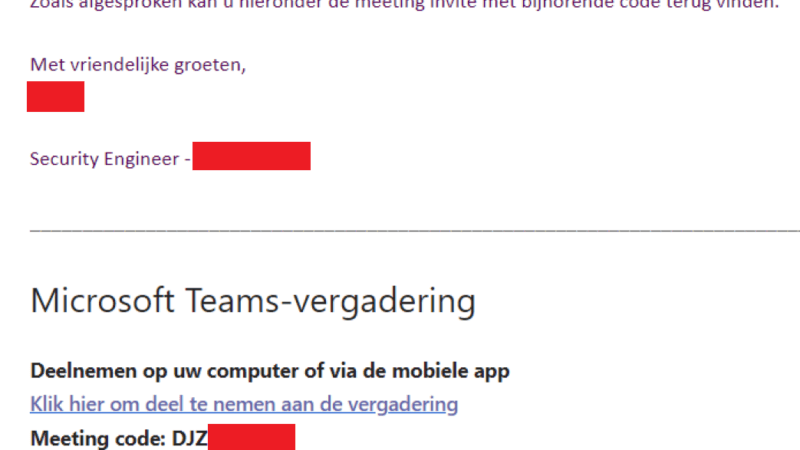

‘Meeting code’ is actually a device code

The meeting URL actually pointed to:

https://www.microsoft.com/devicelogin?meetup-join/19%3ameeting_NjFmMWI4MWEtYTI1My0…

Two things are wrong with this URL:

- A real Teams meeting URL should be pointing to “https://teams.microsoft.com/…”

- Everything after “?” is added to make the URL look more convincing
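Both points can be checked mechanically. The sketch below splits a link into host, path and query and labels it; the function name, the labels, the bare "microsoft.com" host entry and the sample URLs in the usage note are our own assumptions for illustration.

```python
from urllib.parse import urlsplit

# Host/path pairs treated as device code entry pages. The first and last
# appear in this post; the bare "microsoft.com" variant is an assumption.
DEVICE_LOGIN_ENDPOINTS = {
    ("www.microsoft.com", "/devicelogin"),
    ("microsoft.com", "/devicelogin"),
    ("login.microsoftonline.com", "/common/oauth2/deviceauth"),
}


def classify_link(url: str) -> str:
    """Label a URL as 'device-login', 'teams' or 'other'.

    Only host and path are considered. The query string is ignored on
    purpose, because an attacker can put anything after the '?'.
    """
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    path = parts.path.rstrip("/").lower()
    if (host, path) in DEVICE_LOGIN_ENDPOINTS:
        return "device-login"
    if host == "teams.microsoft.com":
        return "teams"
    return "other"
```

For example, `classify_link("https://www.microsoft.com/devicelogin?meetup-join/19%3ameeting_example")` is labelled as a device login page no matter how meeting-like the query string looks, while a link on teams.microsoft.com is labelled as Teams.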

This is the crucial part of the phishing attack. When visiting “https://www.microsoft.com/devicelogin”, the user is redirected to “https://login.microsoftonline.com/common/oauth2/deviceauth”. Note that both are legitimate websites from Microsoft.

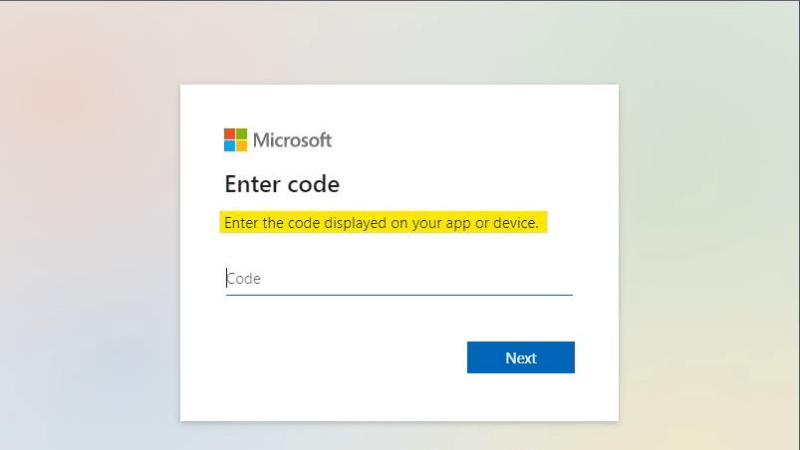

Device code authentication page

The page asks the user to enter a code. If the victim is already logged in to their Microsoft account, submitting the attacker-generated device code will provide the attacker with a token that has access to the resources of the organization as the victim user.

(To summarize: once the victim enters the code, the attacker has access to the victim’s account.)

There are two caveats:

• After generating a code, it is only valid for 15 minutes.

• Entering the code will show an additional warning message to the user: “You are logging in to another device in <attacker_country>. Are you sure you want to do this?”

Both of these issues were solved by the social engineering approach: urgency was introduced by sending the meeting invitation only right before the meeting started. This ensured that the code was still valid when it was entered, and also made it less likely that the user would pay much attention to the warning message.

How to Avoid

We strongly recommend that, as part of general Phishing Awareness training, staff are trained to always check that URLs are valid for the context of the conversation. Too many training programs simply advise staff to check for “https” and maybe that the domain is a valid domain for what they’re expecting. This technique shows that legitimate Microsoft applications and workflows can be abused by attackers.

Staff must always check the path of the URL (e.g. the part after “microsoft.com/”); “devicelogin” should have been a dead giveaway here.

Visibility

At the moment, none of the Microsoft tooling (Defender, AzureAD, O365 logging etc.) generates alerts that a new device code was authorized by a user.

If the attacker accesses resources from another country then an ‘Impossible Travel’ alert may come from tooling. This is easily circumvented by using public VPNs in the target’s country.

Custom alerts could potentially be created by detecting users visiting the ‘devicelogin’ URL or the ‘MIP’ flow; however, that still does not provide any information on the attacker.
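One possible shape for such a custom alert is sketched below. It assumes you can export sign-in records as dictionaries and that each record carries a field naming the authentication protocol. The field name "authenticationProtocol", the value "deviceCode" and the sample record fields are assumptions that you should verify against your own log schema; the function name is hypothetical.

```python
from typing import Iterable


def device_code_sign_ins(
    records: Iterable[dict],
    protocol_field: str = "authenticationProtocol",  # assumed field name
    device_code_value: str = "deviceCode",           # assumed field value
) -> list:
    """Return the sign-in records that used the device code flow.

    Records without the protocol field are skipped rather than flagged.
    The comparison is case-insensitive to tolerate export differences.
    """
    wanted = device_code_value.lower()
    return [
        record
        for record in records
        if str(record.get(protocol_field, "")).lower() == wanted
    ]


# Hypothetical sample data, only to show the expected input shape.
sample = [
    {"userPrincipalName": "a@example.com", "authenticationProtocol": "none"},
    {"userPrincipalName": "b@example.com", "authenticationProtocol": "deviceCode"},
    {"userPrincipalName": "c@example.com"},
]
flagged = device_code_sign_ins(sample)
```

In most organisations very few users legitimately need the device code flow, so even a simple filter like this can produce a short list to review. It still says nothing about who generated the code, which is the limitation noted above.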

Conclusion

The device code phishing technique can be particularly damaging given that:

- There’s no first party alerting

- The payload is input in a legitimate Microsoft domain

- The tokens given can create persistence for 90 days

- The refresh token can be used to request access tokens for any resource the victim can reach

Please consider updating Phishing Awareness training with the tips above, and if you devise a method to generate valuable alerting then implement it immediately.

Further Reading

https://o365blog.com/post/phishing/

https://0xboku.com/2021/07/12/ArtOfDeviceCodePhish.html

https://docs.microsoft.com/en-us/azure/active-directory/develop/